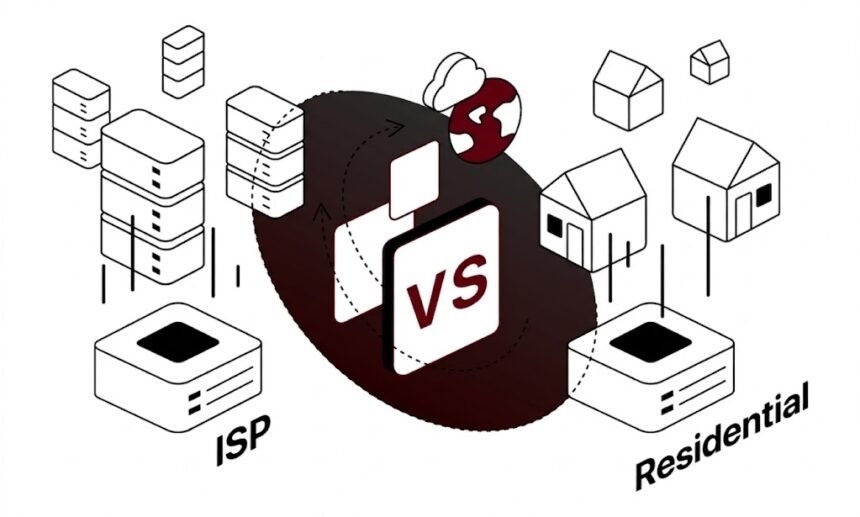

Most comparisons of data center and residential proxies stop at surface-level differences – one is faster, the other looks more legitimate. That framing is too simplified for production environments where your scraping infrastructure, ad verification pipeline, or research automation lives or dies by proxy selection quality.

The technical reality is more nuanced. The right proxy type isn’t determined by a feature checklist; it’s determined by the interaction between your request patterns, target website defenses, acceptable cost-per-request ratios, and geographic distribution requirements. Getting that decision wrong means paying too much, burning IPs at scale, or producing incomplete datasets.

This guide breaks down the architectural differences between data center proxies and residential proxies with enough precision to make an informed infrastructure decision.

The Infrastructure Behind Each Proxy Type

Data center proxies originate from commercial server infrastructure – colocation facilities, cloud providers, and dedicated hosting environments. The IP addresses belong to autonomous systems (ASes) registered to hosting companies like OVH, Hetzner, or AWS. Because they’re assigned to known infrastructure operators, ASN lookups immediately reveal their origin. A website querying MaxMind, ip-api, or similar services will see ORG fields like “AS16509 Amazon.com, Inc.” rather than an ISP name.

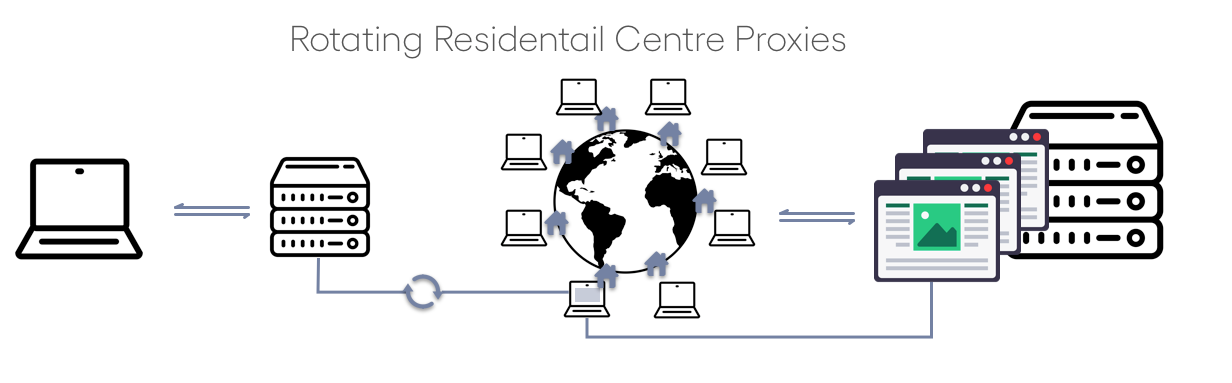

Residential proxies, by contrast, route traffic through IP addresses assigned to actual end users by consumer ISPs – AT&T, BT, Deutsche Telekom, Jio, and thousands of others. The ASN resolves to a telecom operator, the IP has a residential assignment type, and behavioral signals match normal consumer browsing patterns. This distinction has real consequences for how anti-bot systems classify incoming requests.
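As a rough illustration of how little effort the first-pass classification takes, the sketch below labels an ORG string as hosting or non-hosting. The keyword list and the `classify_org` helper are assumptions made for this example; real detection systems rely on maintained IP-intelligence data rather than substring matching.

```python
# Hypothetical keyword list for illustration; production systems use
# maintained IP-intelligence databases, not substring matching.
HOSTING_KEYWORDS = ("amazon", "ovh", "hetzner")


def classify_org(org: str) -> str:
    """Label an ASN ORG string as 'hosting' or 'non-hosting'."""
    name = org.lower()
    if any(keyword in name for keyword in HOSTING_KEYWORDS):
        return "hosting"
    return "non-hosting"


if __name__ == "__main__":
    for org in ("AS16509 Amazon.com, Inc.", "AS3320 Deutsche Telekom AG"):
        print(org, "->", classify_org(org))
```

The point is not the matching logic but the asymmetry: a hosting origin is visible from a single lookup, before any request behavior has been observed.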

Mobile proxies represent a third category worth understanding: they use IPs assigned to mobile network operators, which carry even higher trust scores because carriers reassign addresses dynamically and place many subscribers behind each one (carrier-grade NAT), so blocking a single IP risks blocking many legitimate users. However, they introduce higher latency variance and typically cost more per GB.

How Anti-Bot Systems Distinguish Between Them

Modern bot detection doesn’t rely solely on IP reputation. Platforms like Cloudflare, Akamai Bot Manager, and PerimeterX run multi-signal fingerprinting that includes ASN type, IP history, request cadence, TLS fingerprint, and behavioral entropy. Data center IPs trip multiple signals simultaneously: known ASN, no browsing history, high request velocity, and consistent TLS client hello patterns.

Residential IPs are harder to flag at the IP level, but that doesn’t make them automatically effective. A residential IP running 200 requests per minute to the same endpoint, maintaining identical header order, or missing expected cookie chains will still trigger behavioral detection. The IP type grants a higher baseline trust score; it doesn’t substitute for realistic request modeling.
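A toy model can make the "baseline trust, not immunity" point concrete. The point values, the threshold, and the signal names below are invented for illustration and do not describe how any named vendor scores traffic; the sketch only shows how several independent signals might add up.

```python
# Toy point values and threshold, invented for illustration only.
# This is not how Cloudflare, Akamai, or PerimeterX score requests.
SIGNAL_POINTS = {
    "hosting_asn": 4,
    "no_ip_history": 1,
    "high_request_velocity": 3,
    "static_tls_fingerprint": 2,
}
CHALLENGE_THRESHOLD = 5


def risk_points(signals):
    """Sum the points for every known signal present on a request."""
    return sum(points for name, points in SIGNAL_POINTS.items() if name in signals)


def is_challenged(signals):
    """Return True when accumulated points reach the toy threshold."""
    return risk_points(signals) >= CHALLENGE_THRESHOLD


if __name__ == "__main__":
    datacenter_bot = set(SIGNAL_POINTS)  # trips every signal at once
    residential_bot = {"high_request_velocity", "static_tls_fingerprint"}
    residential_careful = set()
    for label, signals in [
        ("datacenter bot", datacenter_bot),
        ("residential, bot-like behavior", residential_bot),
        ("residential, realistic behavior", residential_careful),
    ]:
        print(label, risk_points(signals), is_challenged(signals))
```

In this toy setup the residential client avoids the ASN penalty but still crosses the threshold on behavior alone, which is the pattern the paragraph above describes.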

What shapes a target’s posture is how it weighs a false negative (a bot let through) against a false positive (a real user blocked). High-security targets (major e-commerce, financial platforms, institutional research portals) have invested significantly in IP intelligence databases, so data center subnets from popular hosting providers are often pre-flagged before a single request is made.

Performance Characteristics: Latency, Throughput, and Reliability

Data center proxies have deterministic performance profiles. Bandwidth is symmetric, latency is low (typically 5–30ms from major colocation hubs to target servers), and uptime is governed by SLA-backed infrastructure. For operations requiring high-throughput, time-sensitive data collection – financial data, real-time price monitoring, news aggregation – this predictability has genuine value.

Residential proxies introduce variability that comes with their architecture. You’re routing through a device owned by an end user, whose connection speed, device load, and ISP conditions affect your tunnel. Median latency for residential proxies is typically 100–400ms, with higher variance. Peer rotation means you may cycle through a fast fiber connection and a congested DSL line in the same session.

For the majority of data collection tasks, residential latency is acceptable. If you’re scraping product pages, collecting SERP data, or verifying ad placements, an extra 200ms per request is negligible at reasonable concurrency levels. Where it matters is in latency-sensitive pipelines where request queuing compounds delays into meaningful throughput constraints.
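To see when latency turns into a throughput constraint, a back-of-the-envelope bound helps: if each worker waits one full round trip per request, throughput cannot exceed concurrency divided by latency. The sketch below assumes that simplified model, counts proxy latency only (target response time is ignored), and uses a hypothetical concurrency of 50.

```python
def throughput_ceiling(concurrency: int, latency_ms: float) -> float:
    """Upper bound on requests/second when each worker blocks for one
    round trip per request (simplified model, proxy latency only)."""
    if concurrency <= 0 or latency_ms <= 0:
        raise ValueError("concurrency and latency_ms must be positive")
    return concurrency * 1000.0 / latency_ms


if __name__ == "__main__":
    workers = 50  # hypothetical concurrency level
    for label, latency in (("data center", 20.0), ("residential", 300.0)):
        print(f"{label}: {throughput_ceiling(workers, latency):.1f} req/s ceiling")
```

With these inputs the ceiling is 2,500 requests per second at 20ms and roughly 167 at 300ms. For most scraping jobs that lower ceiling is still far above the rate you would want to send, which is why the extra latency only matters in pipelines that actually approach it.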

Data Center vs Residential Proxies

| Attribute | Data Center Proxies | Residential Proxies |
| --- | --- | --- |
| Typical Latency | 5–30ms (colocation) | 100–400ms (ISP-dependent) |
| ASN Classification | Hosting/Cloud AS | Consumer ISP AS |
| Detection Risk (High-Security Targets) | High – pre-flagged subnets common | Low–Medium – depends on IP history |
| Throughput Consistency | High – SLA-backed infrastructure | Variable – peer connection dependent |
| Cost per IP | Lower ($0.67–$1.87/month) | Higher (residential pool pricing) |
| IP Pool Size | Smaller – fixed datacenter blocks | Larger – millions of rotating IPs |
| Best For | SEO monitoring, performance testing, low-security scraping | Ad verification, market research on high-security targets |

Cost Analysis: What You’re Actually Paying For

Price per IP is a misleading metric when comparing proxy types. The operative question is cost per successful request, which requires factoring in block rate, retry overhead, and pool burn rate.

Data center proxies are cheaper per IP but may require larger pool rotation for high-security targets. If you’re scraping a target with aggressive hosting-ASN blocking, you might burn through IPs faster than with a smaller residential pool. The economics flip: a $0.67/month shared IPv4 proxy that generates 40% block rates on your primary target costs more in infrastructure and retry logic than a residential proxy that completes the same requests on the first attempt.
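The flip can be made explicit with a small model. The sketch below assumes each attempt is blocked independently with a fixed probability, so the expected number of attempts per success is 1 / (1 - block rate), and it adds a per-failure overhead term for retry handling. Apart from the 40% block rate mentioned above, every number here is hypothetical and chosen only to show the shape of the calculation.

```python
def cost_per_success(attempt_cost: float, block_rate: float,
                     failure_overhead: float = 0.0) -> float:
    """Expected cost of one successful request.

    Assumes independent attempts with a constant block probability,
    so expected attempts per success = 1 / (1 - block_rate).
    """
    if not 0.0 <= block_rate < 1.0:
        raise ValueError("block_rate must be in [0, 1)")
    attempts_per_success = 1.0 / (1.0 - block_rate)
    failures_per_success = attempts_per_success - 1.0
    return (attempt_cost * attempts_per_success
            + failure_overhead * failures_per_success)


if __name__ == "__main__":
    # Hypothetical per-attempt costs and per-failure overhead, in dollars.
    dc = cost_per_success(attempt_cost=0.0001, block_rate=0.40,
                          failure_overhead=0.001)
    resi = cost_per_success(attempt_cost=0.0004, block_rate=0.05,
                            failure_overhead=0.001)
    print(f"data center: ${dc:.6f} per success")
    print(f"residential: ${resi:.6f} per success")
```

Under these assumed inputs the data center option ends up more expensive per successful request despite being four times cheaper per attempt. With a lower block rate or negligible failure overhead the comparison goes the other way, so the model is only useful with block rates measured on your own targets.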

Residential proxies justify their higher cost when the alternative is sophisticated retry logic, CAPTCHA handling overhead, or IP ban management at scale. For targets that actively fingerprint hosting IPs, the total operational cost often favors residential infrastructure despite the higher nominal price per IP.

One practical approach is tiered proxy architecture: data center IPs for the majority of operations (public APIs, low-security data sources, internal testing), with residential pools reserved for targets that require higher authenticity signals. This hybrid model optimizes cost while maintaining coverage across different target risk profiles.
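A minimal sketch of that tiering, assuming you maintain your own list of high-security hosts: requests default to the data center pool and are routed to the residential pool only when the target is on the list. The host names, proxy URLs, and the `select_pool` helper are all placeholders for this example.

```python
from urllib.parse import urlparse

# Hypothetical configuration: hosts you have observed blocking hosting ASNs,
# and placeholder gateway URLs for each pool.
HIGH_SECURITY_HOSTS = {"shop.example.com", "tickets.example.net"}
POOLS = {
    "datacenter": "http://dc-gateway.example:8080",
    "residential": "http://resi-gateway.example:8080",
}


def select_pool(url: str) -> str:
    """Return the pool name to use for a target URL (data center by default)."""
    host = (urlparse(url).hostname or "").lower()
    return "residential" if host in HIGH_SECURITY_HOSTS else "datacenter"


def proxy_for(url: str) -> str:
    """Return the gateway URL of the pool selected for this target."""
    return POOLS[select_pool(url)]


if __name__ == "__main__":
    for target in ("https://shop.example.com/item/1",
                   "https://data.example.org/api/v1/items"):
        print(target, "->", select_pool(target))
```

Defaulting to the cheaper pool and escalating by exception keeps the expensive tier limited to the targets that have actually demonstrated a need for it.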

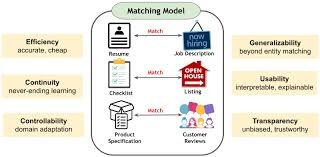

Use Case Mapping: Matching Proxy Type to Task Requirements

Data Collection and Market Research

For data collection at scale, target behavior drives the selection more than any other factor. Public data sources – open government databases, academic repositories, lightly protected e-commerce catalogs – work reliably with data center proxies. High-volume crawling of news aggregators, public pricing data, or non-authenticated endpoints is well within data center proxy capabilities at a fraction of the cost.

Market intelligence on major retailers, competitive pricing from platforms with aggressive bot mitigation, or structured data extraction from sites using Cloudflare or Akamai challenges warrants residential proxies.

Choosing between these approaches often comes down to the specific target’s defenses. Our detailed breakdown of proxy selection strategies for web scraping covers how to evaluate target risk and match it to the right infrastructure before you commit to a pool purchase.

Ad Verification

Ad verification requires checking how ads render for real users in specific geographic markets. Data center IPs will often receive modified ad serving – platforms actively filter what they show to traffic from known scraper infrastructure. Residential proxies are operationally necessary here: they replicate the actual user-facing experience, including localized ad auctions, creative variations, and landing page behavior.

This is one of the clearest cases where residential proxies aren’t a “nice to have” but a technical requirement. Any ad verification system built on data center IPs will produce data that doesn’t reflect real user experience.

SEO Monitoring and SERP Tracking

Search engine ranking data collection sits in an interesting middle ground. Major search engines actively block known data center subnets, and that detection pressure has driven residential proxy usage to near-universal adoption in professional SEO tooling. For accurate rank tracking across different geographic locations, residential proxies with reliable geolocation are the standard.

Data center proxies remain viable for lower-frequency rank checks, long-tail keyword monitoring, or cases where you already have a relationship with a SERP API that handles IP management internally.

Performance Testing and Automation

Load testing, automation pipelines, and API performance validation are ideal for data center proxies. You need consistent throughput, low latency, and deterministic behavior – not residential-level authenticity. Running a 500-concurrent-connection load test through residential peers introduces noise from variable connection quality that obscures the actual performance data you’re trying to capture.

IP Reputation and Pool Quality: The Hidden Variable

The proxy type distinction becomes less useful when the actual IP pool quality is poor. A residential IP that has been heavily used for automation will carry negative reputation signals regardless of its ASN classification. IP reputation databases maintain behavioral histories, and IPs with high prior abuse rates get flagged independent of whether they originate from a consumer ISP or a hosting provider.

Pool freshness matters significantly for residential proxies in particular. Providers maintaining large pools through ethical peer networks have higher proportions of clean IPs than providers recycling heavily used inventory. When evaluating a residential proxy provider, turnover rate, pool size relative to customer base, and how the provider sources IPs are all due diligence items that directly affect success rates on hardened targets.
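Pool quality can also be observed from the application side. The sketch below tracks per-IP success rates and flags an IP for retirement once it has enough samples and falls below a threshold. The `PoolHealth` class, its default thresholds, and the sample addresses (taken from a documentation range) are assumptions for illustration, not a recommended configuration.

```python
from collections import defaultdict
from typing import Optional


class PoolHealth:
    """Track per-IP outcomes and flag IPs whose success rate is too low."""

    def __init__(self, min_samples: int = 20, min_success_rate: float = 0.7):
        self.min_samples = min_samples            # hypothetical default
        self.min_success_rate = min_success_rate  # hypothetical default
        self._stats = defaultdict(lambda: [0, 0])  # ip -> [successes, attempts]

    def record(self, ip: str, ok: bool) -> None:
        """Record the outcome of one request sent through this IP."""
        stats = self._stats[ip]
        stats[0] += int(ok)
        stats[1] += 1

    def success_rate(self, ip: str) -> Optional[float]:
        """Return the observed success rate, or None if the IP is unseen."""
        successes, attempts = self._stats.get(ip, (0, 0))
        return successes / attempts if attempts else None

    def should_retire(self, ip: str) -> bool:
        """True once the IP has enough samples and is below the threshold."""
        successes, attempts = self._stats.get(ip, (0, 0))
        if attempts < self.min_samples:
            return False
        return successes / attempts < self.min_success_rate


if __name__ == "__main__":
    health = PoolHealth()
    for i in range(20):
        health.record("203.0.113.10", ok=(i % 2 == 0))  # half the requests fail
        health.record("203.0.113.20", ok=True)
    print(health.should_retire("203.0.113.10"), health.should_retire("203.0.113.20"))
```

Comparing how quickly IPs from different providers hit the retirement condition on the same target gives a direct, if crude, measure of pool cleanliness.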

For operations requiring consistent results across both proxy types, working with a provider that maintains rigorous IP quality standards across its entire catalog – from shared IPv4 to premium residential – reduces the operational overhead of managing IP health at the application layer. Proxys.io offers both data center and residential proxy infrastructure with individual IP allocation, ensuring you’re not sharing pool reputation with other users’ traffic patterns.

Geographic Coverage: Where the Two Types Diverge

Data center proxy coverage tends to cluster around major internet exchange points – primary data centers in Frankfurt, Amsterdam, London, New York, Dallas, and similar hubs. Getting data center coverage in emerging markets or smaller geographic regions requires working with providers that have deliberately built out those locations.

Residential proxy networks derive their geographic distribution from their peer user base. Large residential networks have genuinely global footprints because they mirror actual consumer ISP distribution – if a country has significant internet penetration, there are residential IPs available. This makes residential proxies the practical choice for localized research in markets where data center infrastructure is sparse.

Use Case Decision Matrix

| Use Case | DC Proxy | Residential | Primary Reason |
| --- | --- | --- | --- |
| Public API data collection | ✓ Preferred | Optional | Low detection risk, cost efficiency |
| Ad verification | Not recommended | ✓ Required | Must replicate real user experience |
| SEO / SERP monitoring | Low accuracy | ✓ Preferred | Search engines block hosting ASNs |
| Performance / load testing | ✓ Preferred | Adds noise | Consistent throughput required |
| E-commerce market research (high-security) | High block rate | ✓ Preferred | Target actively filters hosting IPs |
| Internal automation / testing pipelines | ✓ Preferred | Unnecessary cost | Authenticity signals not needed |
| Localized research in emerging markets | Limited coverage | ✓ Preferred | Broader geographic distribution |

When Neither Type Is the Bottleneck

A persistent misconception is that switching proxy types solves block rate problems. Often it doesn’t, because the block is caused by request behavior rather than IP classification. Uniform request intervals, identical User-Agent strings across thousands of requests, missing Referer headers, or consistent geographic-behavioral mismatches will produce high failure rates regardless of whether you’re using data center or residential IPs.

Before investing in a different proxy tier, audit your request fingerprinting. Are you rotating User-Agent strings realistically? Are your TLS parameters matching what a browser would send? Are your request intervals following a natural distribution rather than a fixed clock? These behavioral signals are evaluated in combination with IP classification – fixing IP type while ignoring behavioral fingerprinting yields marginal improvement at best.

Rotating header profiles, implementing realistic session state management (cookie handling, referrer chains), and matching request cadence to plausible human behavior are all implementation-level improvements that amplify the effectiveness of either proxy type.
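Two of these improvements are easy to sketch: replacing a fixed clock with randomized delays, and choosing one coherent header profile per session rather than shuffling individual headers on every request. The profiles, the exponential delay model, and the parameter values below are illustrative assumptions; they are not a claim about what any detection system treats as human-like.

```python
import random

# Illustrative header profiles. Each one is kept internally consistent and
# is meant to be held for a whole session, not swapped on every request.
HEADER_PROFILES = [
    {
        "User-Agent": ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
                       "AppleWebKit/537.36 (KHTML, like Gecko) "
                       "Chrome/120.0.0.0 Safari/537.36"),
        "Accept-Language": "en-US,en;q=0.9",
    },
    {
        "User-Agent": ("Mozilla/5.0 (X11; Linux x86_64; rv:121.0) "
                       "Gecko/20100101 Firefox/121.0"),
        "Accept-Language": "en-GB,en;q=0.8",
    },
]


def pick_session_profile(rng: random.Random) -> dict:
    """Choose one header profile to use for an entire session."""
    return dict(rng.choice(HEADER_PROFILES))


def jittered_delays(count: int, rng: random.Random,
                    min_delay: float = 1.0, mean_extra: float = 2.0) -> list:
    """Return `count` delays in seconds: a fixed floor plus an exponentially
    distributed extra wait, so intervals are never uniform."""
    return [min_delay + rng.expovariate(1.0 / mean_extra) for _ in range(count)]


if __name__ == "__main__":
    rng = random.Random()  # pass a seed for reproducible runs
    headers = pick_session_profile(rng)
    print(headers["User-Agent"])
    print([round(d, 2) for d in jittered_delays(5, rng)])
```

Header rotation alone does not address TLS fingerprinting: a Chrome User-Agent sent over a non-browser TLS stack is itself a mismatch, so the profile should match the client actually making the connection.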

Making the Final Infrastructure Decision

The selection framework is straightforward when applied systematically. Start with target analysis: what is the ASN filter posture of your primary data sources? Targets that actively block hosting ASNs require residential infrastructure from the start. Targets with minimal bot mitigation can be served cost-effectively with data center proxies.

Layer in volume and cost requirements. If your operation runs hundreds of thousands of requests per day against multiple targets with different risk profiles, a tiered architecture separating low-security and high-security targets across different proxy types is the operationally efficient choice. Single-target operations with clear threat model requirements can commit to one type without the management overhead of mixed pools.

Finally, assess provider quality independently of proxy type. Pool freshness, geographic coverage depth, protocol support (HTTP/HTTPS/SOCKS5), and the allocation model (individual vs. shared IP assignment) affect operational reliability more than the data center vs. residential distinction in many real-world deployments.

Conclusion

Data center and residential proxies are not competing products – they’re complementary infrastructure components with different performance envelopes and cost profiles. Data center proxies deliver speed, consistency, and cost efficiency for targets where hosting-ASN classification isn’t a disqualifying factor. Residential proxies deliver authenticity signals and broader geographic distribution for targets where IP origin determines whether your request is served or rejected.

The engineer’s job is to match those characteristics to the actual operational requirements, not to chase the higher-tier product by default. A well-configured data center proxy setup will outperform a poorly implemented residential proxy deployment on almost any metric. Proxy type is one input into infrastructure quality – not a shortcut around the need for sound implementation.